Network Poisoning

Before deep learning algorithms can be deployed in security-critical applications, their robustness against adversarial attacks must be put to the test. The existence of adversarial examples in deep neural networks (DNNs) has triggered debate about how secure these classifiers are. As artificial intelligence (AI) and its subfields of machine learning (ML) and deep learning (DL) become embedded in the social fabric of every nation, maintaining the security of these systems and the data they use is paramount. IDC estimated the global cyber security market at $107 billion in 2019, growing to $151 billion by 2023. ML poisoning could become a significant attack vector for hackers seeking to undermine AI systems and the organizations building businesses and processes around them.

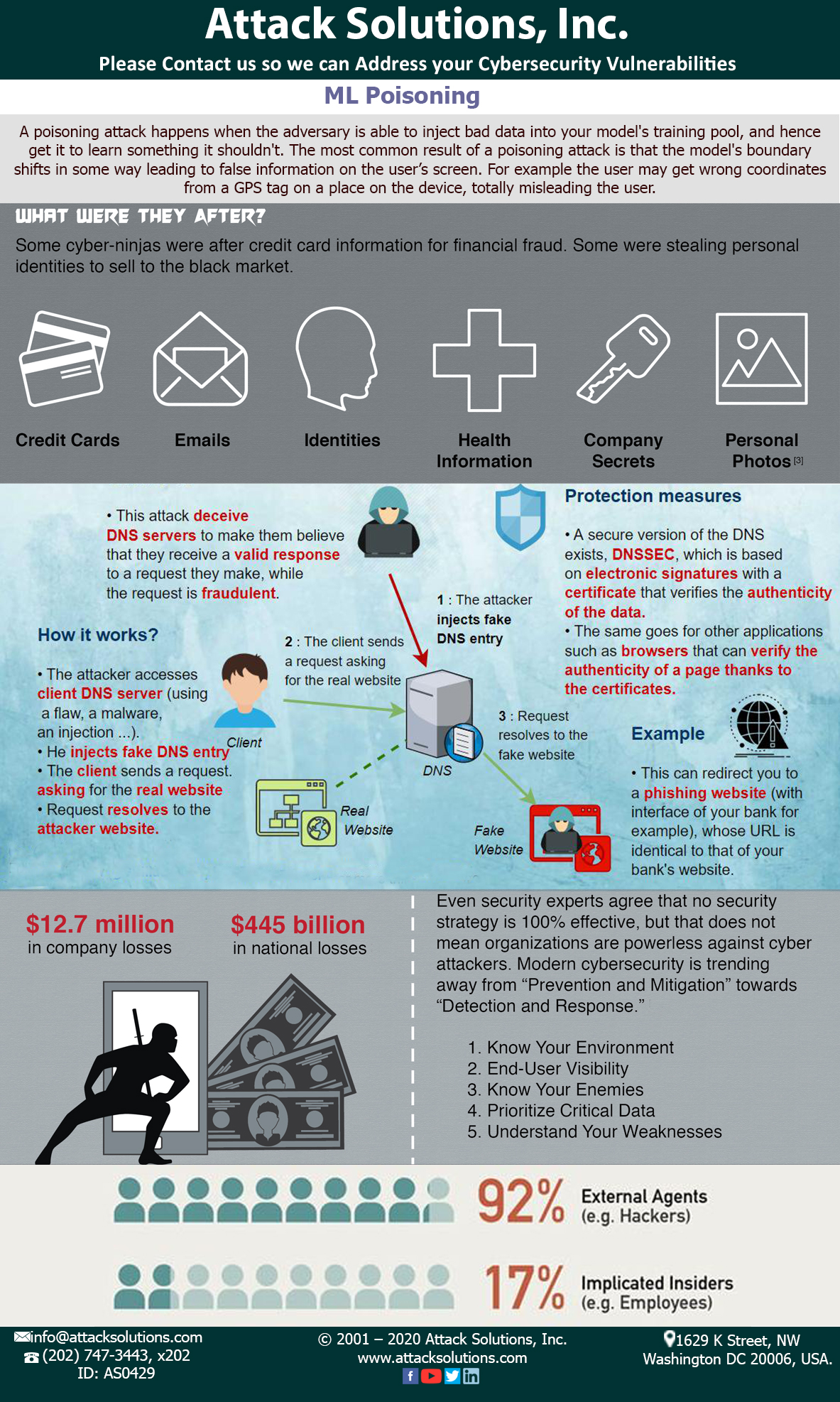

The most common result of a poisoning attack is that the model’s decision boundary shifts, so the application presents false information to the user. For example, a user may receive the wrong coordinates for a GPS-tagged place on a device, which misleads the user entirely. Attacks on ML models can be categorized by the attacker’s goal (espionage, sabotage, or fraud) and by the stage of the machine learning pipeline they target: training or production, also called attacks on the algorithm and attacks on the model, respectively. The main categories are evasion, poisoning, trojaning, backdooring, reprogramming, and inference attacks. Evasion, poisoning, and inference are currently the most widespread.
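To make the boundary shift concrete, here is a minimal sketch in Python. It assumes a toy one-dimensional nearest-centroid classifier; the names `fit_threshold` and `predict`, the data values, and the poison points are all hypothetical and chosen only for illustration. Real models and real attacks are far more complex, but the mechanism is the same: a few mislabeled training points move the boundary, and a legitimate input ends up on the wrong side.

```python
from statistics import mean

def fit_threshold(samples):
    """Fit a 1-D nearest-centroid classifier and return its decision boundary.

    samples: list of (feature, label) pairs with labels 0 and 1.
    The boundary is the midpoint between the two class means.
    """
    mean0 = mean(x for x, y in samples if y == 0)
    mean1 = mean(x for x, y in samples if y == 1)
    return (mean0 + mean1) / 2

def predict(threshold, x):
    """Classify x as 1 if it lies above the boundary, otherwise 0."""
    return 1 if x > threshold else 0

# Clean training data: class 0 clusters around 1.0, class 1 around 5.0.
clean = [(0.0, 0), (1.0, 0), (2.0, 0), (4.0, 1), (5.0, 1), (6.0, 1)]

# Hypothetical poison: two distant points that the attacker labels as class 0.
poison = [(9.0, 0), (9.0, 0)]

clean_boundary = fit_threshold(clean)
poisoned_boundary = fit_threshold(clean + poison)

probe = 4.0  # a legitimate class-1 input taken from the clean data
print("clean boundary:", clean_boundary, "prediction:", predict(clean_boundary, probe))
print("poisoned boundary:", poisoned_boundary, "prediction:", predict(poisoned_boundary, probe))
```

With the clean data the boundary sits midway between the two clusters and the probe is classified as class 1. After only two poisoned points are added, the class-0 mean is dragged upward, the boundary moves past the probe, and the same input is classified as class 0.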

Based on the adversary’s capabilities, poisoning strategies can be broadly classified as label modification, data injection, data modification, and logic corruption. Backdoors and Trojans are two related attack types: their goals and the kinds of attackers behind them differ, but technically they are quite similar to poisoning attacks. In either case, the intention is to gain access to the data set and alter the model’s behavior so that it produces false output. In an arms race, it is not sufficient to react to observed attacks. Here at Attack Solutions, we therefore proactively anticipate the adversary by predicting the most relevant potential attacks through what-if analysis; this makes it possible to develop suitable countermeasures before an attack actually occurs, in line with the principle of security by design.
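The three data-level strategies named above can be sketched as simple transformations of a training set. This is an illustrative Python sketch only: the function names and the tiny data set are hypothetical, labels are assumed to be binary, and data modification is shown here as a feature shift, which is just one of the forms it can take. Logic corruption is left out because it tampers with the learning algorithm itself, not the data.

```python
def flip_labels(samples, indices):
    """Label modification: flip binary labels at the chosen indices; features are untouched."""
    chosen = set(indices)
    return [(x, 1 - y) if i in chosen else (x, y) for i, (x, y) in enumerate(samples)]

def inject_points(samples, crafted):
    """Data injection: append attacker-crafted records; existing records are untouched."""
    return list(samples) + list(crafted)

def modify_features(samples, indices, delta):
    """Data modification: shift the feature of chosen existing records; labels are untouched."""
    chosen = set(indices)
    return [(x + delta, y) if i in chosen else (x, y) for i, (x, y) in enumerate(samples)]

# Hypothetical training set of (feature, label) pairs.
train = [(0.0, 0), (1.0, 0), (4.0, 1), (5.0, 1)]

print(flip_labels(train, [0]))            # first record now carries the wrong label
print(inject_points(train, [(9.0, 0)]))   # one crafted record added at the end
print(modify_features(train, [2], -3.0))  # third record moved into the other cluster
```

Each function returns a new list and leaves the original data unchanged, which makes it easy to compare a model trained on clean data with one trained on each poisoned variant during a what-if analysis.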
